A Brief History of the Internet and the Problems Facing Broadband in America

The colossal labyrinth of pulses and wires we refer to as “the Internet” is sort of like the jumble of wires and plugs behind your uncle’s VCR. Sure, it works — but it’s largely improvised, and for the love of God don’t touch anything.

Much like that old VCR, much of America’s network infrastructure is showing its age.

This has become increasingly clear in the past year as policy changes around Net Neutrality and regulatory standards have been riling up consumers, Internet providers, and Internet access advocacy groups alike.

Overall, one thing is clear: the US has some issues when it comes to the modern innovation it helped give birth to.

The heart of the trouble goes deeper than policy changes around how content is delivered. While it’s popular to blame providers, the underpinnings of the issues are in truth much more complex.

- The geography problem: America is huge and fiber is expensive. Connecting a building can cost anywhere from $500 to $50,000, depending on distance and local regulation.

- The politics problem: US regulation is generally more relaxed than in other developed countries. The regulations that do exist tend to be outdated, and companies aren’t incentivized to compete directly.

- The “First Mover” problem: America invented the Internet, and with so much money sunk into now-outdated copper networks, that “technology debt” makes building over them at scale hard to justify. Countries like South Korea that are known for incredible Internet infrastructure had the advantage of building out their digital infrastructure from scratch on mature technologies, with all the benefit of our mistakes and hindsight.

Before diving directly into the issues (and what can be done about them), however, let’s briefly take a look at how the web you’re familiar with today came into existence, starting right at the peak of the Soviet Union’s influence.

From there, we’ll explore the nuances of the way your connection is structured and ultimately delivered to your doorstep, and why it’s a fragile system in need of change.

The Internet: A Network Built on Cold War Fears

On October 4th, 1957, the Soviet Union surprised the world by launching the first man-made satellite into orbit around the Earth.

Known as Sputnik, the device didn’t have much in the way of technology onboard its beachball-sized hull, but that didn’t stop Americans from beginning to feel that they were actually falling behind in terms of technological progress. As a result of this revelation, a greater emphasis on developing and researching technological projects began to take shape at all levels of American society. From the government to the private sector, and even in the classroom, science was nudged towards the forefront of the people’s interests.

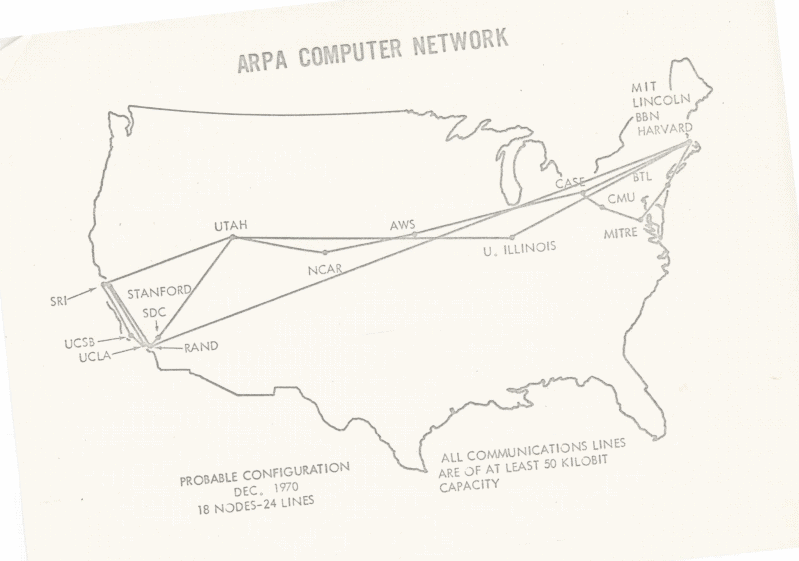

It was this renewed vigor that gave rise to the first wide-area network, called the ARPANET, which delivered its first message in 1969.

Throughout the following two decades, this initial network grew into thousands of similar connections between various points all around the globe.

In 1991, however, things changed forever once again.

That year, Tim Berners-Lee, a British computer scientist working at CERN in Switzerland, introduced the masses to the concept of a World Wide Web: a system of interconnected information hubs that any user could freely navigate to and interact with. Going far beyond the simple peer-to-peer file-sending capabilities of the ARPANET, Berners-Lee’s system laid the groundwork for the all-consuming Internet we know today.

America’s Internet Report Card

At the time of this posting, the average US download speed is 22.69 megabits per second (Mbps).

For reference, that’s less than half the average speed in countries like Norway, Hungary, and the Netherlands. Americans don’t just get slower speeds than many other countries; they also pay more per megabit. For instance, new data shows that a 500 Mbps connection from an Internet provider in Los Angeles costs users an average of $299 per month, whereas a 1,000 Mbps download speed can be had in cities like Paris, France, for a mere $35 and some change.
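To make the “more per megabit” point concrete, here is a minimal Python sketch that divides each monthly price by its advertised download speed. It uses only the two figures quoted above, and it rounds the Paris price down to a flat $35, so treat the result as a rough illustration, not a market-wide comparison. The function name `price_per_mbps` is ours, not an established metric or API.

```python
def price_per_mbps(monthly_price_usd: float, download_mbps: float) -> float:
    """Return the monthly cost in US dollars for each Mbps of download speed."""
    if download_mbps <= 0:
        raise ValueError("download speed must be positive")
    return monthly_price_usd / download_mbps


# Figures quoted in the article; the Paris price is rounded down to $35 (assumption).
los_angeles = price_per_mbps(299, 500)
paris = price_per_mbps(35, 1000)

print(f"Los Angeles: ${los_angeles:.3f} per Mbps")
print(f"Paris:       ${paris:.3f} per Mbps")
print(f"Los Angeles pays roughly {los_angeles / paris:.1f}x more per Mbps")
```

On these two data points alone, the Los Angeles connection costs about 17 times more per megabit than the Paris one.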

Meanwhile, South Korea, a country that did not develop a robust network infrastructure until the late 1990s and early 2000s, enjoys the fastest average speeds in the world, according to data from Internet monitoring firm Akamai.

America placed 14th on the list, behind countries like Belgium, Romania, and the Czech Republic. The comparison with South Korea isn’t entirely fair, as the country is both much smaller and much more densely populated than the US, which allows for shorter cable runs and significantly lower costs.

All the same, the country serves as a stark reminder of what is possible with a concentrated effort centered around ensuring that all of its citizens have stable, fast connections to the Internet. In terms of consumer choice, things are much rosier in the lower half of the Korean peninsula as well.

Though there are still only three major providers in South Korea at the moment (KT Corp, SK Broadband, and LG U+), numerous smaller options exist that keep the country in a constant state of healthy competition, making consumers the clear winner at the end of the day.

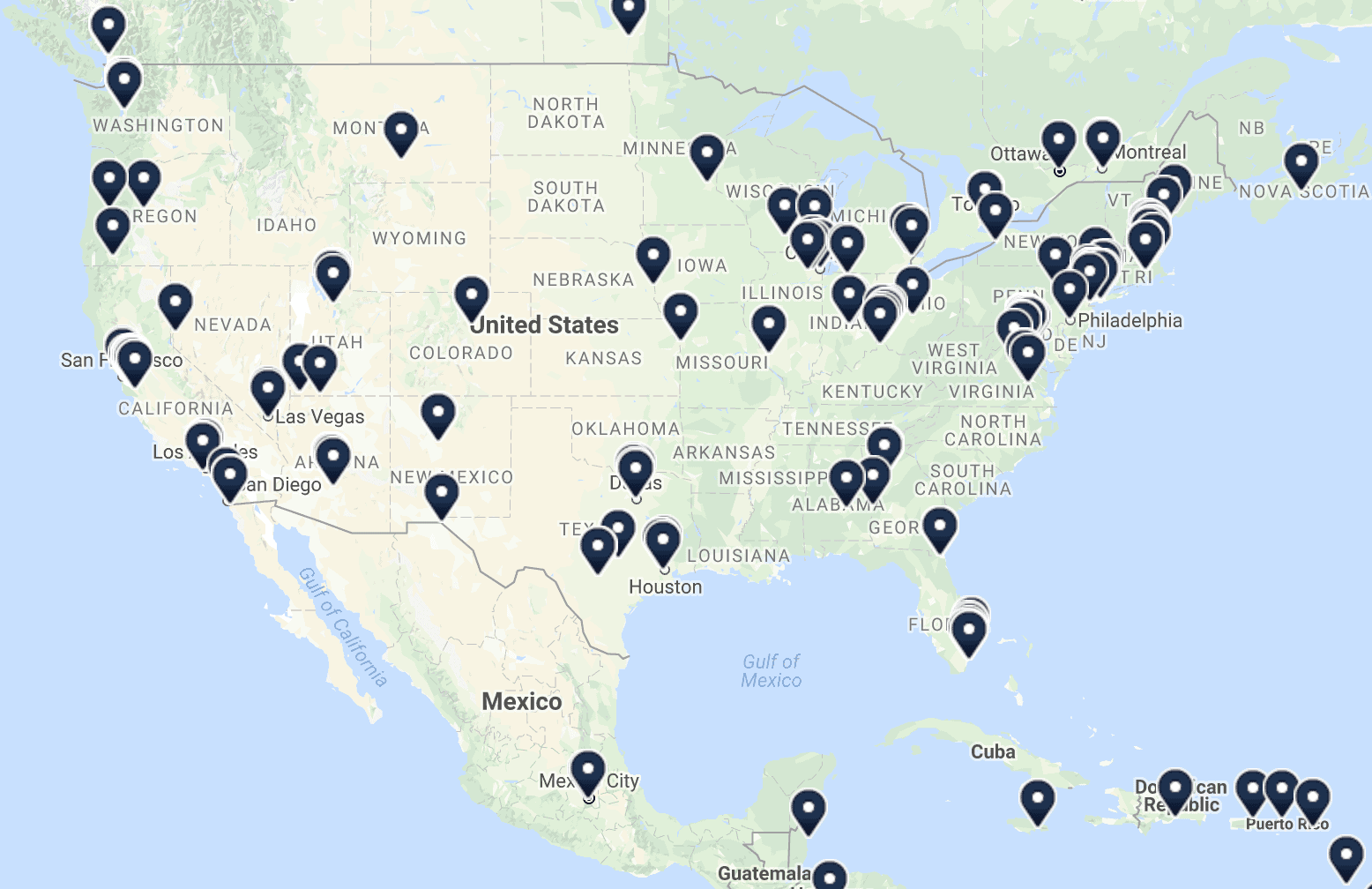

How limited are the options in the US by comparison? Recent numbers show that as many as 50 million US households have access to only one service provider offering at least 25 Mbps download speeds, or to none at all. Considering how much time Americans spend on their devices, we certainly don’t seem to have many options when it comes to how we get connected, or how much we pay for the ability to do so.

So, why is it that the world’s largest (and most-developed) economy has landed in such a poor position when it comes to giving users attractive options for their Internet service? The shortest answer: money. The slightly longer explanation: our last-mile network infrastructure is severely lacking, and there’s very little incentive for those in power to do anything about it.

An Anatomy of America’s (Aging) Internet Infrastructure

Understanding how your devices communicate with the wider Internet is crucial to truly grasping America’s current connectivity problem, but it’s easier to comprehend than you might expect.

There are three critical “tiers” that provide the structure we use to connect to the Internet, and in order to understand why download and upload speeds are so poor in the US relative to other countries, you need to have at least a basic grasp of each of them.

Tier Three

The third tier is commonly described as the “last mile” of your connection, and it’s also the easiest to understand for most. Controlled by just a few players (primarily Spectrum, Comcast, AT&T, and Verizon), this section involves the physical wires that run from your home or apartment to a nearby hub.

These hubs are central clusters of routing equipment that dot the landscape in cities across America. Cables running underground and overhead on poles feed into them, and there individual connections are collected and organized into digital data (ones and zeros).

Tier Two

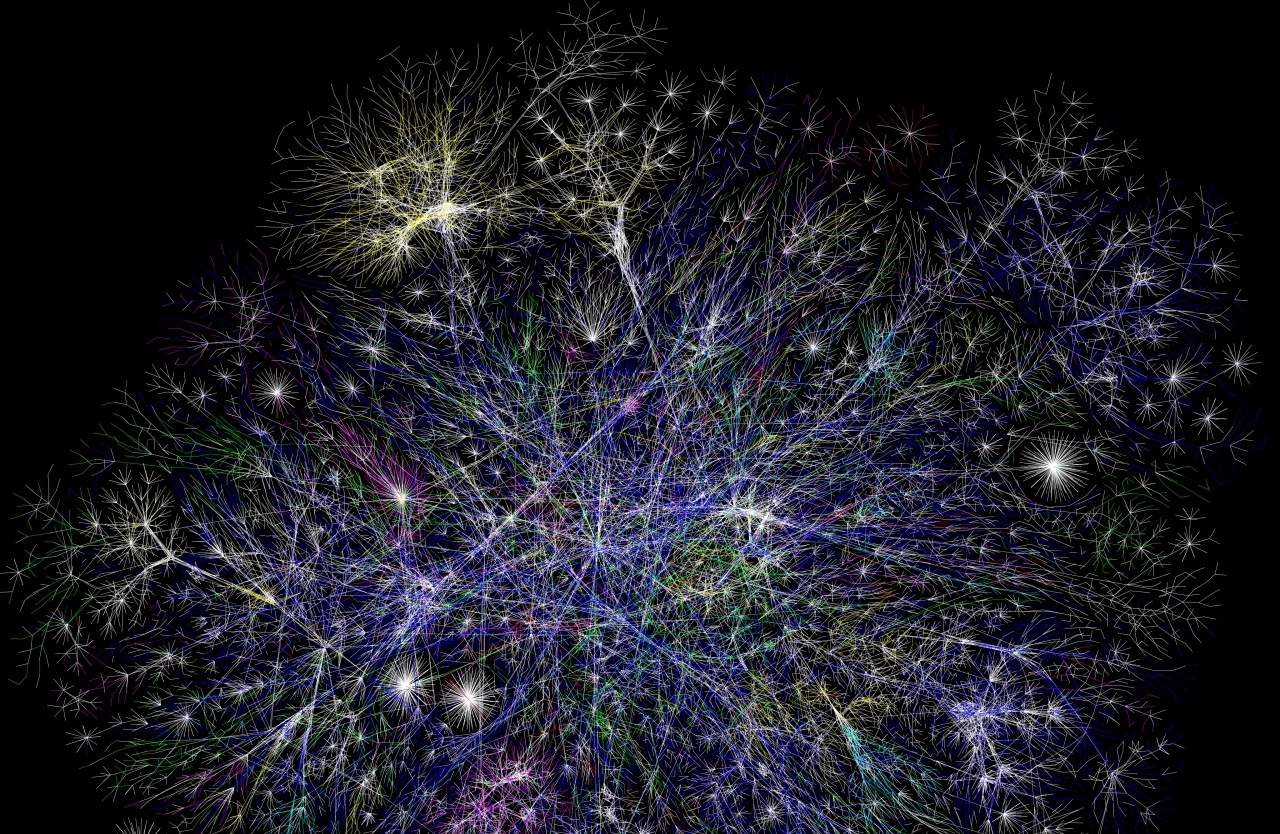

From here, your data is pooled together with that of others in your area, expanding into the second tier, where things get a bit more complex. That’s because this wider structure of the web in America is owned by many different companies, all of which have to get along in order to provide users with uninterrupted speeds.

The main idea is that tier two providers are incentivized to “peer” with larger tier one providers like Cogent, Level 3, and AT&T in order to gain access to all of the various routes connected to the wider Internet.

These peering connections happen at “Internet exchanges” distributed across the globe.

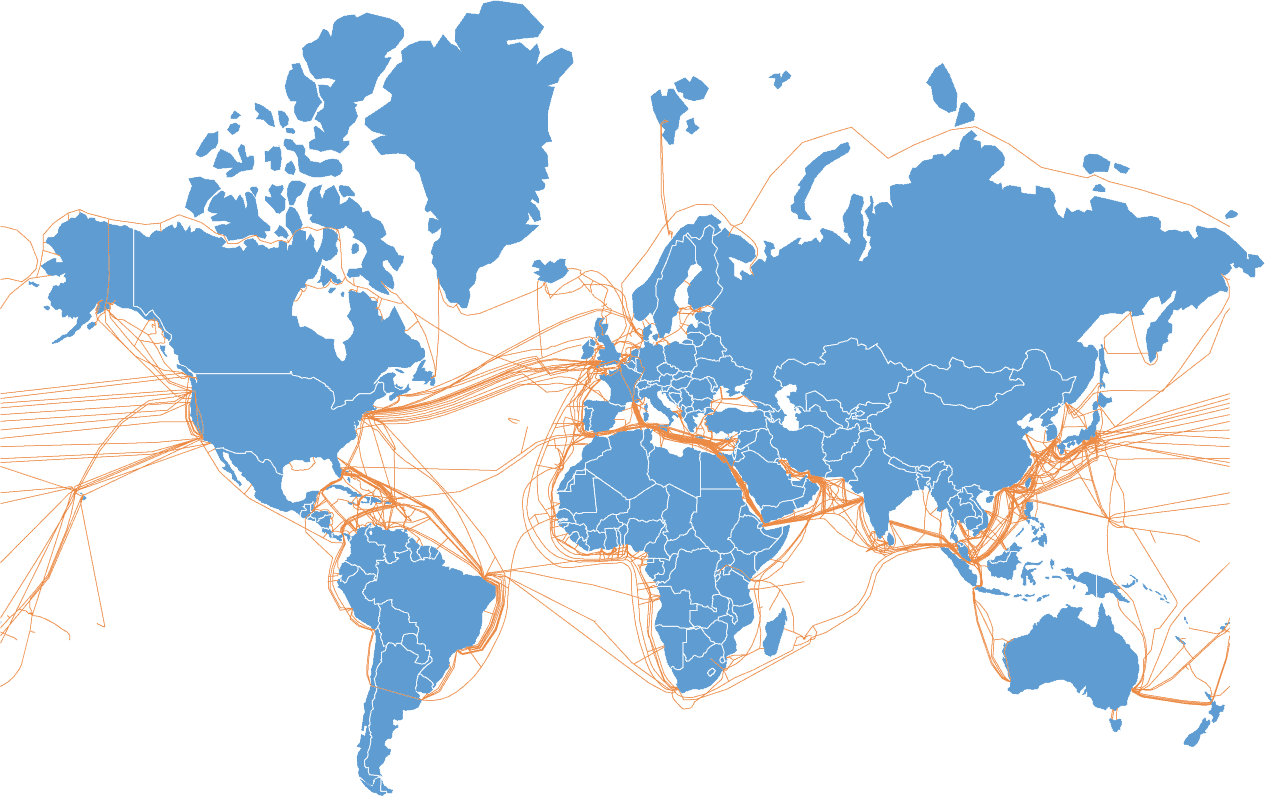

Telegeography

Now imagine that all of the middle-men owners of these connection points got along perfectly with one another. Data could move freely around the world, and we’d all live in some sort of blissful ultra-connected utopia (okay, maybe it wouldn’t be that blissful, but still).

The unfortunate reality is that these companies are constantly competing and negotiating with each other, working out complex peering deals that sometimes get held up for months in the deliberation process, slowing your data down as a result.

Tier One

The last (and largest) portion is commonly referred to as the “backbone” of the Internet. This is the globe-spanning network of cables you might have pictured when thinking about how you communicate with users all over the planet.

For the most part, this section is also controlled by heavy hitters such as Verizon and AT&T, along with several other companies that you’ve probably never heard of.

Roadblocks To Improvement

Unsurprisingly, the largest performance slowdown happens at the last mile, which acts as a bottleneck for the rest of the wider network.
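One way to picture the bottleneck is to treat a connection as a chain of segments, one per tier, where the slowest segment caps what you actually get. The Python sketch below is a deliberately simplified model under that assumption. The `Segment` class, the `bottleneck` helper, and every capacity figure are hypothetical and chosen purely for illustration; none of them are measurements.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Segment:
    name: str
    capacity_mbps: float  # hypothetical capacity available to a single user


def bottleneck(path: list[Segment]) -> Segment:
    """Return the slowest segment, which caps end-to-end throughput in this model."""
    return min(path, key=lambda segment: segment.capacity_mbps)


# Illustrative numbers only (assumptions, not real-world data).
path = [
    Segment("tier three (last mile)", 25),
    Segment("tier two (regional networks)", 1_000),
    Segment("tier one (backbone)", 10_000),
]

slowest = bottleneck(path)
print(f"{slowest.name} limits this connection to {slowest.capacity_mbps} Mbps")
```

However fast the upstream tiers are in this toy model, the user never sees more than the last mile can carry, which is why upgrades there matter most.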

When we spoke with our office’s residential Internet expert, Jameson Zimmer, he described this last mile as “basically hijacking telephone and cable lines and slipping a different product into the pipes.” (Yes, we know the Internet isn’t “a series of tubes,” but it’s a helpful way to think about it.)

The few companies that own this infrastructure often operate without robust competition, which leaves the pricing power on a key communication tool at the mercy of a handful of companies who — as is normal for companies in a free market economy — have to put their shareholders first.

To make matters more complex from a business perspective, the need to maintain expensive rural copper networks in order to keep residents connected to critical communication and 911 services has resulted in a labyrinth of maintenance requirements. This prevents many providers from allocating resources to fiber upgrades, even when they want to. Today’s top Internet speeds have long left these earlier copper technologies in the dust, with connections creeping up to gigabit (1,000 Mbps!) speeds and beyond.

This is a prime example of how being the first mover on a preeminent technology isn’t always an advantage in the long run. The very things that make America a strong force for change in the world (a spirit of innovation and a free and open market) are actually holding us back in this regard, and the “if it ain’t broke, don’t fix it” approach to rural networks certainly isn’t helping.

Simply put, it’s no surprise that ISPs don’t act like nonprofits or utility companies when it comes to improving their customers’ connectivity.

In a world where being connected is increasingly considered an integral aspect of being a productive member of society, that obviously creates a serious problem when large swathes of the population struggle to pay for speeds that are slower overall than those in other developed nations.

America’s Digital Future

This is where the great net neutrality debate comes into play. With the FCC entangled in a complex web of interests, it’s up to those in Congress and in business alike to be proactive, thinking up and engineering solutions that will pave the way for future growth.

Until major service providers are given sufficient reason to augment and improve their aging infrastructure in America, nothing will happen. Whether this change is spurred on by clever technological innovations or more government intervention remains to be seen, but it’s increasingly likely that we’ll need a bit of both in order to get the job done.

One example comes from a company called Monkeybrains, which is beginning to offer direct, high-speed Internet access to users through quickly evolving fixed wireless technology. By doing so, the company effectively bypasses a stretch of wires in the last mile and lets users pay rates as low as $35 per month (after a $250 initial installation fee) for connection speeds that rival those offered by traditional coaxial and fiber cables.
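Because of that one-time installation fee, the real monthly cost depends on how long you keep the service. Here is a quick Python sketch that spreads the quoted $250 fee across a few contract lengths. The 12, 24, and 36 month horizons are our own assumptions for illustration, not terms offered by the provider, and `effective_monthly_cost` is a hypothetical helper.

```python
def effective_monthly_cost(monthly_usd: float, install_usd: float, months: int) -> float:
    """Average monthly cost once a one-time installation fee is spread over `months`."""
    if months <= 0:
        raise ValueError("months must be positive")
    return (install_usd + monthly_usd * months) / months


# $35 per month and a $250 installation fee come from the article;
# the time horizons below are illustrative assumptions.
for months in (12, 24, 36):
    cost = effective_monthly_cost(35, 250, months)
    print(f"{months} months: ${cost:.2f} per month")
```

The longer a customer stays, the closer the effective price gets to the advertised $35.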

It isn’t just smaller entities getting in on this, however; Google has been slowly pivoting towards fixed wireless offerings since acquiring Webpass in 2016. Of course, this only applies to those who live in cities where these companies are already operating, for the moment at least. A true networking revolution will require this kind of innovative thinking on a nationwide scale, which is something that we’ve yet to see.

More recently, the tech giant announced that its smart-city spinoff, Sidewalk Labs, has penned a deal with the city of Toronto to turn 800 acres of land bordering Lake Ontario into an “Internet city.” Projects like this may be a tremendous boon to future city planning with robust consumer networks in mind, allowing for even greater innovation on a municipal level.

So, where do we go from here? We understand the problem, and why it’s so difficult to get around, and we also know what needs to happen in order to truly bring on the change we so desperately need.

Ultimately, America’s Internet problem doesn’t have one swift, all-encompassing fix. The only path forward relies upon improved and modernized regulations, and meaningful competition amongst the heavy hitters.

Common Proposed Solutions to US Broadband Issues

In closing, here are some of the specific proposed solutions currently popular with pro-competition broadband advocates.

- Municipal bonds: A municipal bond system that would attempt to make the 30-year payoff for local fiber infrastructures much more reasonable.

- Shared wiring requirements: A system for sharing wiring in the last mile, allowing more small companies to compete on customer service and encouraging competition in areas that historically have had none.

- Increased and updated regulation: A broad, all-encompassing overhaul of our regulatory bodies to encourage a greater rate of innovation and change. (As emphasized by Tom Wheeler, FCC Chairman under Barack Obama.)

- Decreased and updated regulation: Continued deregulation to reduce regulatory burdens on Internet providers. (As emphasized by Ajit Pai, FCC Chairman under Donald Trump.)